That is a very dangerous question for a leader to ask when evaluating options. Yet it is one I hear far too often in the healthcare realm. It encapsulates a rejection of innovation, evolution and learning all in one terse, often rhetorical, question.

A common context for this question, often prefixed by, “If this is so great…,” is when discussing semantics and semantic technology. Although these concepts are not new to some industries, such as media, they are foreign in many healthcare organizations. Yet we know that healthcare payers and providers alike struggle with massive data integration and data analytics challenges just like media conglomerates.

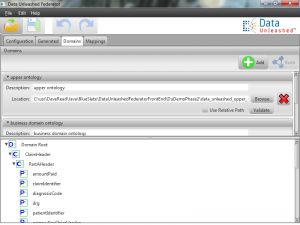

Large healthcare organizations commonly need to combine siloed information, drive an analytics mindset throughout the organization, and support the flexibility of a constantly changing IT environment. Repeated attempts by organizations to meet these needs betray a lack of consensus around how best to achieve a valuable result.

Further, the implication that how most organizations solve a problem is optimal ignores the fact that best practices must change over time. The best way to solve a problem last year may not be the best way this year. The healthcare industry is changing, the physical world of servers, networks, disk drives and memory is changing, and the expectations of members are changing. What was infeasible years ago becomes commonplace. Relational databases were all but unworkable in the 1970s due to a lack of experienced DBAs, slow disk drives, slow processors and limited memory.

In the same way, semantic formalization and graph databases were too new and limited to deal with large data sets until people gained expertise with ontologies while system hardware benefitted from another generation of Moore’s law. In the face of ongoing innovation, the question leaders should ask when approaching a challenge is, “What advancements have been made since the last time we looked at this problem?”

Leadership requires leading, not following. Leaders mentor their organizations through change in order to reach new levels of success. Leadership is based on learning, open-mindedness, creativity and risk-taking. The question, “Why isn’t everybody doing it?” is the antithesis of leadership and has no place there. In fact, if everybody is doing something, a leader would be better off asking, “How do we get ahead of what everybody is doing?”

Leaders must be at the forefront of pushing for better, faster, cheaper. Questioning the status quo, looking for new opportunities and seeking to leapfrog the competition are key foci for leadership.

As a leader, the next time you find yourself limiting your willingness to explore an option because everybody isn’t doing it, keep in mind that calculators, computers, automobiles, elevators, white boards, LED light bulbs, Google Maps, telephones, the Internet, 3-D printing, open heart surgery and many other concepts that are now accepted or gaining traction each had a day when only one person or organization was “doing it.” Challenge yourself and your organization to find new options, new best practices and new paradigms for advancing your strategy and goals.